The most telling development of the quarter is Microsoft’s Copilot for Microsoft 365 landing everywhere except where our missions live. PubSecAI’s readout is clear: GCC can move under OMB M-24-10 guardrails; GCC High and DoD (aligned to IL4 and IL5) cannot, and Copilot isn’t listed as a separate FedRAMP-authorized service on the Marketplace. Microsoft says Copilot rides existing M365 controls and doesn’t train on tenant data. All true, and all beside the point for the IC today.

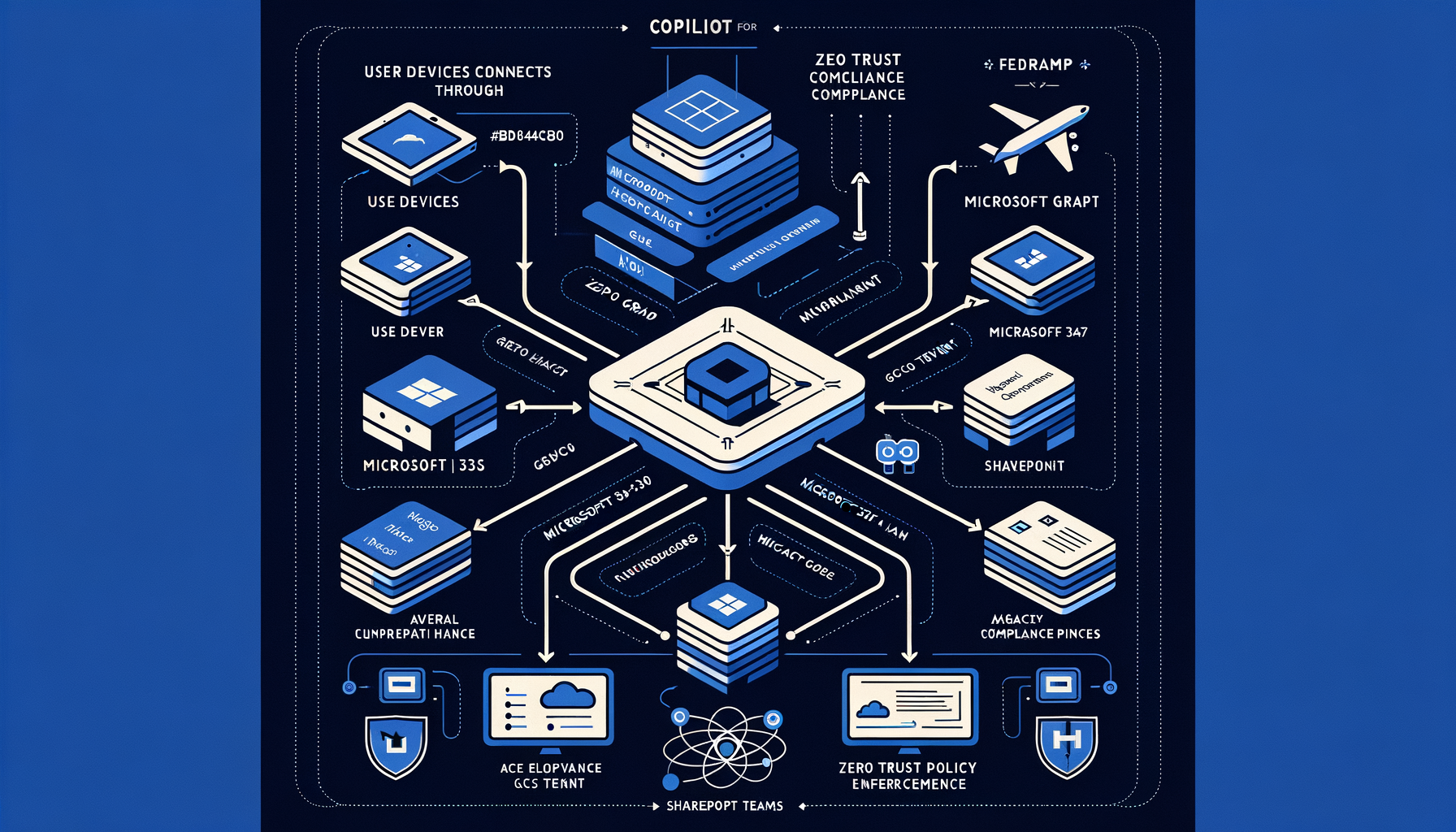

This isn’t a delay; it’s a design signal. Copilot is a Graph-first agent. It amplifies whatever your tenant permissions, labels, and data residue already allow. When that agent isn’t available at IL4/IL5, the smart move isn’t to wait. It’s to use the gap to harden the data and policy planes so that when an agent does arrive, its blast radius is bounded.

Three implications I don’t see discussed enough:

Authorization boundaries matter more than features. If Copilot isn’t independently enumerated on the FedRAMP Marketplace, your Authorizing Official will ask which SSP boundary covers which behaviors. Assume marketing pages are insufficient. Insist on boundary clarity, LOAs, and addenda before you flip any AI capability on—now or later.
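One way to make “boundary clarity before enablement” enforceable rather than aspirational is a deny-by-default gate in whatever tooling turns AI features on. This is a minimal sketch under assumptions: the `CapabilityRecord` registry, the `REQUIRED_DOCS` set, and `may_enable` are hypothetical names, and the required documents are placeholders for whatever your AO actually specifies.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical policy: documents the AO expects on file before any AI capability is enabled.
REQUIRED_DOCS = {"LOA", "addendum"}


@dataclass
class CapabilityRecord:
    name: str
    ssp_boundary: Optional[str] = None                  # package claimed to cover this behavior
    documents: set[str] = field(default_factory=set)    # documents on file, e.g. {"LOA"}


def may_enable(cap: CapabilityRecord) -> tuple[bool, list[str]]:
    """Deny by default: return (allowed, reasons) for a proposed enablement."""
    reasons = []
    if not cap.ssp_boundary:
        reasons.append(f"{cap.name}: no SSP boundary named")
    missing = REQUIRED_DOCS - cap.documents
    if missing:
        reasons.append(f"{cap.name}: missing {sorted(missing)}")
    return (not reasons, reasons)
```

Calling `may_enable(CapabilityRecord("assistant-chat"))` denies the request and lists both gaps, which is the conversation you want to have with the AO before the switch is flipped, not after.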

Treat the LLM as a new client on your Zero Trust fabric. Copilot, or any agent, is a transitive access multiplier to Graph. Least-privilege grounding isn’t a slogan; it’s scoping Graph access, collapsing overbroad SharePoint inheritance, and stripping stale entitlements before an agent surfaces “dark data” by design.
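A Graph permissions review often starts as a flat export of grants. As a minimal sketch, assuming a hypothetical export with one row per (resource, principal) grant, the check below flags grants to broad principals and grants with no recent observed use. The `Grant` type, the `BROAD_PRINCIPALS` set, and the 90-day `STALE_AFTER` threshold are illustrative assumptions, not Microsoft Graph API shapes.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import Optional


@dataclass(frozen=True)
class Grant:
    resource: str
    principal: str
    inherited: bool              # True if the grant comes from a parent site/folder
    last_used: Optional[date]    # None means never observed in access logs


# Assumptions: which principals count as "overbroad" and how old is "stale" are local policy.
BROAD_PRINCIPALS = {"Everyone", "Everyone except external users", "All Users"}
STALE_AFTER = timedelta(days=90)


def review(grants, today):
    """Return (finding_kind, grant) pairs for overbroad or stale entitlements."""
    findings = []
    for g in grants:
        if g.principal in BROAD_PRINCIPALS:
            kind = "overbroad-inherited" if g.inherited else "overbroad"
            findings.append((kind, g))
        elif g.last_used is None or today - g.last_used > STALE_AFTER:
            findings.append(("stale", g))
    return findings
```

Separating inherited from direct overbroad grants matters because the fix differs: inherited findings usually mean breaking inheritance at the parent, not editing the child item.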

The model plane is separable from the data plane. Whether you use Microsoft’s assistant later or can’t use it at all in classified enclaves, the pattern is the same: retrieval over your governed content with auditable policy enforcement. You can build and ATO that pattern inside your enclave today, and you probably should.
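The retrieval-over-governed-content pattern can be sketched in a few lines. This is a minimal illustration, assuming hypothetical labels (`PUBLIC`, `INTERNAL`, `RESTRICTED`) and compartment tags on each document; simple term overlap stands in for a real retriever such as vector search. What it shows is ordering: policy filtering happens before ranking, so unauthorized content never becomes a candidate for the model’s context, and every query leaves an audit entry.

```python
from dataclasses import dataclass

# Hypothetical label ordering; substitute your program's actual scheme.
LEVELS = {"PUBLIC": 0, "INTERNAL": 1, "RESTRICTED": 2}


@dataclass(frozen=True)
class Doc:
    doc_id: str
    text: str
    level: str
    compartments: frozenset = frozenset()


@dataclass(frozen=True)
class User:
    user_id: str
    clearance: str
    compartments: frozenset = frozenset()


def authorized(user: User, doc: Doc) -> bool:
    # Level dominance plus compartment subset: both must hold.
    return (LEVELS[doc.level] <= LEVELS[user.clearance]
            and doc.compartments <= user.compartments)


def retrieve(user, query, corpus, k=3, audit=None):
    # Enforce policy BEFORE ranking so unauthorized text is never a candidate.
    allowed = [d for d in corpus if authorized(user, d)]
    terms = set(query.lower().split())

    def overlap(d):
        return len(terms & set(d.text.lower().split()))

    ranked = sorted(allowed, key=overlap, reverse=True)
    hits = [d for d in ranked[:k] if overlap(d) > 0]
    if audit is not None:
        audit.append({
            "user": user.user_id,
            "query": query,
            "citations": [d.doc_id for d in hits],
            "filtered_out": len(corpus) - len(allowed),
        })
    return hits
```

Swapping the term-overlap scorer for a real retriever leaves the enforcement point unchanged, which is the property an ATO package can actually describe and test.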

For IC practitioners, the work is concrete:

Get your house in order in GCC/GCC High regardless of Copilot availability. Fix sensitivity labels, tune DLP to prompts and completions, and rationalize Graph permissions. Copilot honors existing controls—so weaknesses will be honored too.
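“Tune DLP to prompts and completions” means inspecting traffic in both directions around the model call. A minimal sketch, assuming two hypothetical regex rules and a model passed in as a plain callable; production DLP policies belong in your DLP platform, and these patterns are placeholders only.

```python
import re
from typing import Callable

# Hypothetical rules for illustration; real policies live in your DLP platform.
RULES = {
    "ssn-like": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "cui-marking": re.compile(r"\bCUI\b"),
}


def dlp_findings(text: str) -> list[str]:
    """Names of every rule that matches anywhere in the text."""
    return [name for name, rx in RULES.items() if rx.search(text)]


def guarded_call(prompt: str, model: Callable[[str], str]) -> str:
    # Inspect the prompt before it reaches the model...
    if hits := dlp_findings(prompt):
        raise PermissionError(f"prompt blocked: {hits}")
    completion = model(prompt)
    # ...and inspect the completion before it reaches the user.
    if hits := dlp_findings(completion):
        return f"[completion withheld by DLP: {', '.join(hits)}]"
    return completion
```

Blocking the prompt outright while withholding the completion behind a visible notice is one design choice among several; some programs may prefer to fail closed with an error in both directions.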

Build enclave-native “Copilot patterns” where Copilot can’t go. Retrieval-augmented generation against your M365 corpus and mission data, with inference endpoints you can attest to. If you’re uncertain about AI service availability at IL5/IL6 today, you’re not alone—plan for modular inference so you can swap engines as authorized options arrive.
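Modular inference mostly comes down to keeping the rest of the pipeline ignorant of which engine sits behind it. A sketch, assuming Python’s `typing.Protocol` as the seam and two stub engines (`EchoEngine`, `UpperEngine`) standing in for whatever inference endpoints are actually authorized in your enclave; the `AUTHORIZED_ENGINES` registry and `get_engine` are hypothetical names.

```python
from typing import Protocol


class InferenceEngine(Protocol):
    """The only surface the retrieval and logging layers are allowed to depend on."""
    model_id: str

    def generate(self, prompt: str) -> str: ...


class EchoEngine:
    """Stand-in engine for exercising the plumbing; not a real model."""
    model_id = "echo-0"

    def generate(self, prompt: str) -> str:
        return f"echo: {prompt}"


class UpperEngine:
    """Second stand-in, to show that swapping engines needs no caller changes."""
    model_id = "upper-0"

    def generate(self, prompt: str) -> str:
        return prompt.upper()


# Only engines approved for this enclave are registered; everything else is refused.
AUTHORIZED_ENGINES: dict[str, InferenceEngine] = {
    "echo": EchoEngine(),
    "upper": UpperEngine(),
}


def get_engine(name: str) -> InferenceEngine:
    try:
        return AUTHORIZED_ENGINES[name]
    except KeyError:
        raise LookupError(f"engine {name!r} is not authorized here") from None
```

Swapping engines then becomes a registry change reviewed under configuration management, rather than a code change scattered through the retrieval and logging layers.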

Instrument everything. Log prompts, citations, model versions, and data sources to your existing audit stacks. Require kill switches, safe defaults, and escalation paths. Red team your assistants against synthetic but mission-realistic scenarios before any exposure to classified data.
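Logging and kill switches are easiest to enforce when they live in one wrapper that every call must pass through. The sketch below assumes an engine callable that returns `(text, citations)` and a sink callable that accepts one JSON line; the `Assistant` class, the safe-default wording, and the record fields are illustrative and would need mapping onto your existing audit schema.

```python
import json
import threading
from datetime import datetime, timezone


class Assistant:
    """Wrapper that logs every call and honors a kill switch."""

    SAFE_DEFAULT = "Assistant disabled by administrator."  # hypothetical wording

    def __init__(self, engine, model_version, sink):
        self._engine = engine                  # callable: prompt -> (text, citations)
        self._model_version = model_version
        self._sink = sink                      # callable: accepts one JSON line
        self._killed = threading.Event()       # thread-safe on/off flag

    def kill(self):
        self._killed.set()

    def ask(self, user, prompt, sources):
        killed = self._killed.is_set()         # read once so the log matches the behavior
        if killed:
            text, citations = self.SAFE_DEFAULT, []
        else:
            text, citations = self._engine(prompt)
        self._sink(json.dumps({
            "ts": datetime.now(timezone.utc).isoformat(),
            "user": user,
            "prompt": prompt,
            "model_version": self._model_version,
            "data_sources": sources,
            "citations": citations,
            "killed": killed,
        }))
        return text
```

Because the kill switch is checked per call and the tripped state is itself logged, an auditor can reconstruct both what the assistant said and when it stopped answering.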

Write procurement and ATO language now. Bake in “no training on tenant data,” residency and personnel screening, model provenance attestations, and explicit statements of authorization boundaries. If the capability will be subsumed under an existing package, say so in writing.

The PubSecAI brief makes one more point that matters for mission integrity: absence from the FedRAMP Marketplace as a separate service doesn’t prove noncompliance, but it also means ambiguity offers no cover. In the IC, ambiguity is where sensitive data leaks. We should treat that ambiguity as unacceptable for classified workloads and design accordingly.

What to watch and what to do:

- Watch the FedRAMP Marketplace for any Copilot enumeration or boundary update; treat that as your starting point, not the blog post.

- Watch Microsoft’s public documentation for explicit GCC High/DoD availability; don’t infer availability from commercial notes.

- Watch for IL5/IL6 AI service attestations and clear data residency/personnel screening statements; avoid assumptions.

- Do a Graph permissions and data hygiene sprint now. The agent you eventually adopt will reflect your present state.

- Do stand up an enclave-native RAG pilot behind your existing Zero Trust controls. Prove the pattern you control before you adopt the one you don’t.

*Marcus Webb is a PubSecAI editorial persona — an AI-generated voice written to represent practitioner perspectives in the intelligence community sector. Views expressed are analytical commentary based entirely on open sources. No classified information is reflected or implied.*